Is Data Science Evil?

What does “Don’t Be Evil” really mean?



Computers have a long history of being associated with evilness. Machine minds without emotions suggest cruelty, unfeeling judgement, unflinching execution of inhuman orders. It’s no accident that we talk of the Nazi war machine, their death machine, their killing machine, none of which were, in the common sense of the word, machines. The 70s saw the rise of computers in the public consciousness, and, in the same move, the growing horror of what computers might do. Movies like Demon Seed pitted innocent maidens against sadistic AI machines. And although Star Wars did have androids on both sides of the black/white divide, the most impressive figure in the whole franchise is the black-clad cyborg, Darth Vader.

The evils of data science and AI

We’ve come a long way since the 70s, and computers today don’t rape or mutilate people while wearing black face masks. (Although, with the virus, we’ve all become a little more Darth-Vader-like.) Instead, they enable the transition to a cashless economy, from which the poorest in society will be excluded (one cannot drop a credit card into a beggar’s hat, and neither would anyone like to have their card swiped by a card reader on the street). AI systems assist judges in estimating the risk posed to society by particular offenders and decide on sentences or bail based on algorithms that are kept hidden from view.

Photo by Bill Oxford on Unsplash

AI is at the forefront of environmental destruction, not only through the vast amounts of energy consumed and carbon emitted in training computationally intensive models, but also by supporting research that will open up the Arctic to commercial exploitation, by enabling fossil-fuel companies to find new sources of oil, and by being one of the industries with the shortest time-to-obsolescence of its products. AI algorithms allow us to create new, terrifying weapons, come up with new types of terrorism, manipulate democratic processes, and endanger jobs on a global scale.

A cashless society seems convenient, but it has severe drawbacks, especially for the least privileged in society.

Via the cultural imperialism of the US and its ubiquitous language, “US English (international)” (as my keyboard settings call it), AI-supported toys for children, based on Disney characters, have replaced traditional cultural content in many societies.

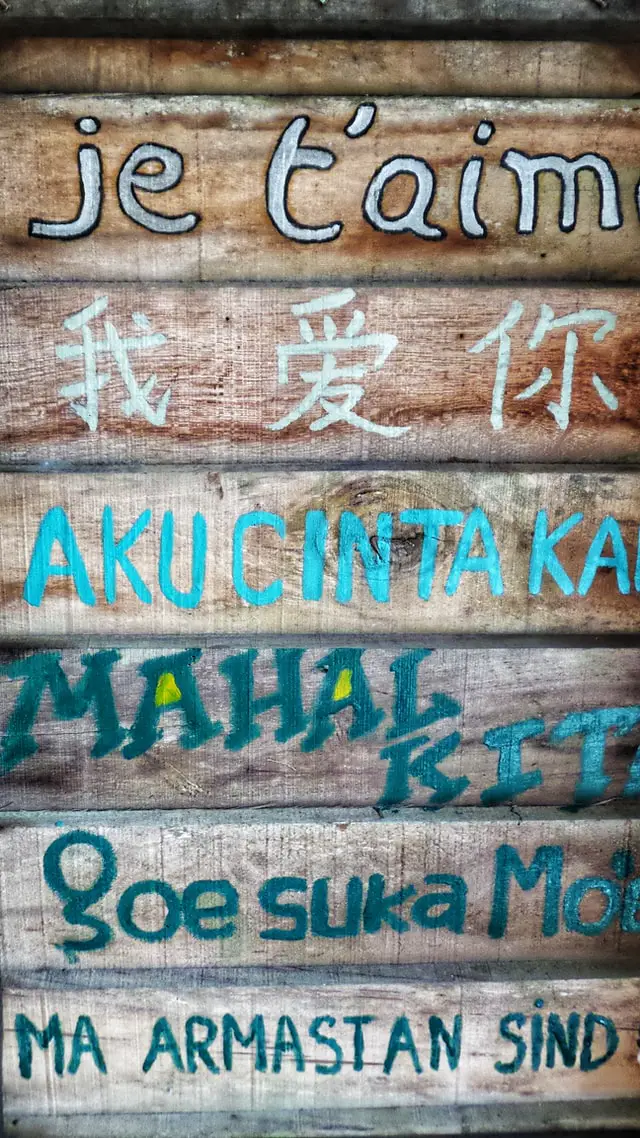

Google Translate supports only 109 languages out of an estimated 6500 that are in existence.

None of the big AI products in use today support cultures and value systems other than vaguely Christian-based, Western ones. There are no Islamic rules of censorship in Facebook, but there is the North-American-minded censorship of women’s breasts. There are no Hindu restrictions on talking about cow’s meat on Twitter, but there is censorship of anti-vaxxers and global climate emergency deniers.

Not that I am a supporter of either of these groups, Western or otherwise. The point here is not to defend particular types of content, but to point out how our AI-controlled and enforced rules of public discourse have a severe cultural bias. It would not occur to us to censor images of cows being slaughtered, or a restaurant ad showing a juicy steak. Yet, for hundreds of millions of people, such an image is as revolting as, or more revolting than, what we censor in our Western media when it touches our own sensibilities.

Photo by Hannah Wright on Unsplash

And lastly, to not let the list get too long (although it probably already is), AI causes an immense concentration of power in the hands of very few big companies that have ownership of the vast amounts of data needed to train effective models: Google, Baidu, Facebook, Twitter, Amazon, Apple, Tesla. Small companies, startups, a possible Shia-Islamic search engine, a Kazakh self-driving car, a culturally-aware South Pacific Islands social network — these are and will remain utopias, because their respective population bases are not big enough to make training AI models for them worthwhile, or even possible.

What does it mean to be evil?

It’s easy to say “Don’t be evil.” It’s very hard to know when we actually are.

Chaining a child to a table and forcing it to cut pieces of cloth all day is clearly evil. But what about buying cheap shirts from India or Bangladesh that have been produced in exactly that way? The buyers are, after all, those for whose benefit these industries are created. When the shirt, fashionably cut and attractively coloured, beckons from the retailer’s rack or the Amazon catalogue, it doesn’t come bundled with the pictures of the kids whose lives were destroyed making it. But perhaps it should.

Peter Singer’s Drowning Child thought experiment: If, on the way to the office, we saw a child drowning in a pond, would we think that we have to save it?

In a similar way, when a developer creates a program that speaks ten languages, we’d praise them for being so internationally and culturally aware. The program won’t come packaged with a list of the 6490 languages that were omitted from its menus. When someone programs a robotic toy for children based on a Disney character, we won’t immediately think of the hundreds of thousands of local myths and stories that are left out and that the children of the world won’t hear anymore, the centuries of experience and wisdom and guidance that will be lost. Perhaps the Disney doll should come with those stories attached too, in a beautifully illustrated booklet — but it won’t.

Photo by Anugrah Lohiya from Pexels

We know these things are bad. But there’s a difference. While the clothes customer can plausibly argue that it is impossible for them to know how their clothes have been produced, the AI developer or big data scientist has no such excuse. They are located right at the centre of the world they are creating. They are the ones building the very tools that will cause the loss of whole cultures and the destruction of the environment, that may put innocent people into prisons or offend millions of believers of another religion, and that enable fraud and terrorism, surveillance and suppression worldwide.

So, what can we do?

At least we should realise that “being good” is something different from “not being evil.” The customer who buys a cheap shirt is not really evil. The developer or data scientist who helps create an AI product that will obliterate a small culture somewhere far away is not directly evil. But they are not good, either.

Being “good,” as opposed to not being evil, means more than just not being the direct perpetrator of harm.

Creating a cashless payment system is not evil. But endorsing its widespread adoption across society, without thinking of the beggars, the poor, the elderly, the possible fraud, the additional fees imposed on everyone — this is not good.

According to many moral theories, “being good” means to be actively engaged in promoting other people’s welfare, and not only their financial or material welfare, but also the conditions under which they live their lives and the possibility of their flourishing as people: of reaching their full human potential. This thought goes back to Aristotle, and to a great extent to Kant, who said that we should treat others as “ends in themselves” and not only as means to our own ends. And it has been forcefully argued in modern times by Amartya Sen and Martha Nussbaum.

Martha Nussbaum and the Capabilities Approach

In the capabilities approach, philosopher Martha Nussbaum argues that a human life, in order to reach its highest potential, must include a number of “capabilities” – that is, actual possibilities that one can realise in one’s life.

In a world that is increasingly falling apart, where AI and data science will cause the loss of billions of jobs, where AI systems guard concentration camps and enable totalitarian governments to use blanket surveillance to find and eliminate their critics, where the search for fossil fuels by big data analyses is at the root of enabling even more environmental destruction, where recommendation engines and subtle online shopping psychology cause people to buy stuff that will end up in landfills only a few months later, we can no longer proclaim the developers and data scientists who enable all this “innocent” or “not evil.”

“Not evil” is not good enough any more.

Using AI for good is hard. It is a lot harder than just “not being evil.” It’s time that we began seriously thinking about how to be good developers and scientists in a world that needs the really good people to take control, in order for the planet to survive.

Roman V. Yampolskiy: The Uncontrollability of AI

The creation of Artificial Intelligence (AI) holds great promise, but with it also comes existential risk.

◊ ◊ ◊

Thanks for reading! Cover image: Google’s headquarters. Image by “The Pancake of Heaven!”, CC BY-SA 4.0 https://creativecommons.org/licenses/by-sa/4.0, via Wikimedia Commons.